Samuel Edusa MD

Unraveling AI Lingo: No-Blush Guide to the Terms You've Been Secretly Curious About (Part 1)

Samuel Edusa | Apr 16, 2023

Robot teaching students (created using Stable Diffusion)

Artificial intelligence (AI) can seem intimidating, but the core concepts aren't that complicated. Knowing a few terms makes it much easier to follow conversations about AI and understand what's actually going on.

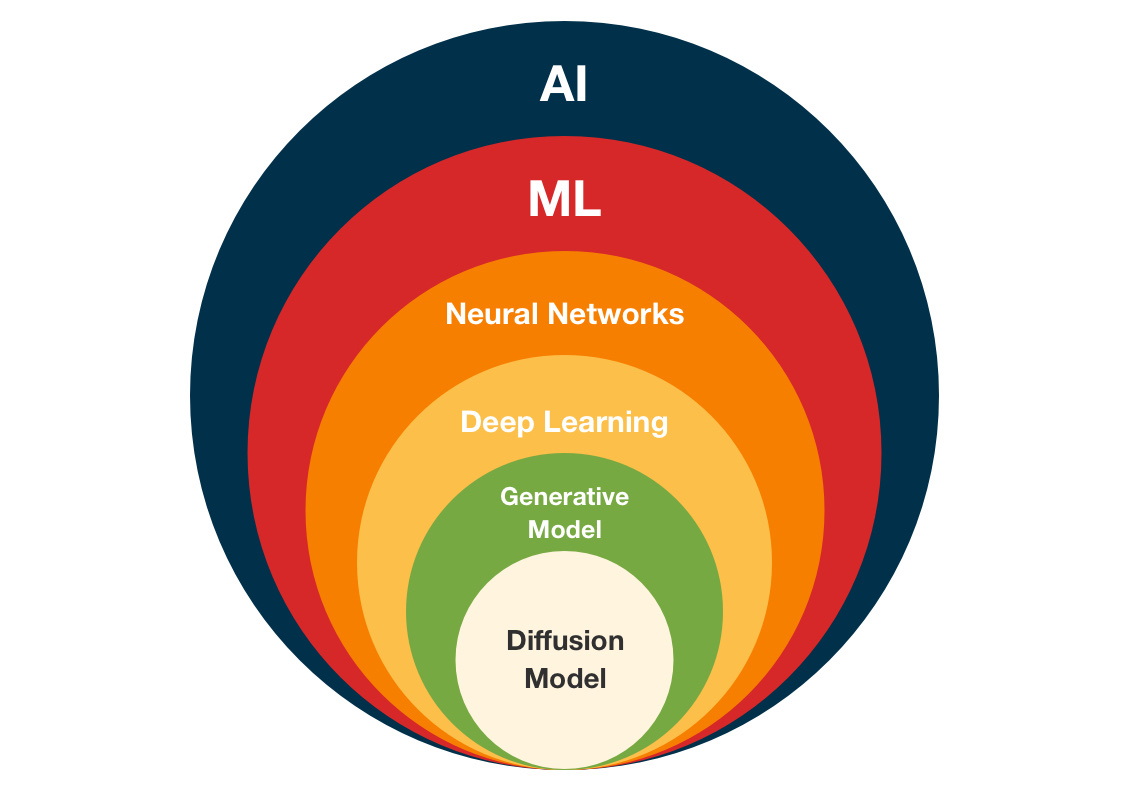

A simplified AI hierarchy diagram (created using Pixelmator Pro)

What is Artificial Intelligence?

Radiologist using AI to help review x-ray (created using Stable Diffusion)

AI is about building computer systems that can do things we'd normally need human intelligence for. The computer analyzes a situation and either takes an action or makes a prediction to achieve a goal.

For example, in medical imaging: a computer can be trained to look at X-rays or MRIs and identify patterns that suggest disease. The AI algorithm picks up on subtle anomalies in the images and flags them for a doctor to review.

One thing worth noting: people often use "AI" and "machine learning" interchangeably, but they're not the same thing. Machine learning is one approach to building AI, and it's behind most of the recent progress, but AI as a concept is broader.

What is an algorithm?

An algorithm is a set of step-by-step instructions designed to accomplish a specific task. In medicine, that might mean diagnosing a condition from symptoms or predicting how well a treatment will work. Algorithms are the foundation of AI (and computing in general). Right now, most AI applications in medicine use machine learning-based algorithms.
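To make "step-by-step instructions" concrete, here is a classic hand-written medical algorithm: computing body mass index (BMI) and mapping it to the standard adult categories. A person wrote every rule and every cut-off; nothing is learned from data. This is a minimal illustrative sketch, not clinical software.

```python
def bmi_category(weight_kg: float, height_m: float) -> tuple[float, str]:
    """Compute BMI and return it together with its adult category.

    Every step and every threshold below was chosen by a person,
    which is what separates a hand-coded algorithm from a learned model.
    """
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")

    # Step 1: BMI = weight (kg) divided by height (m) squared
    bmi = weight_kg / height_m ** 2

    # Step 2: compare against fixed, human-chosen thresholds
    if bmi < 18.5:
        category = "underweight"
    elif bmi < 25:
        category = "normal weight"
    elif bmi < 30:
        category = "overweight"
    else:
        category = "obese"
    return bmi, category


bmi, category = bmi_category(70, 1.75)
print(f"BMI {bmi:.1f}: {category}")  # BMI 22.9: normal weight
```

A machine learning model does the same kind of input-to-output job, but the "thresholds" are numbers it learns from examples instead of numbers a person types in.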

What is Machine Learning (ML)?

Illustration of a machine [robot] learning (created using Stable Diffusion)

A machine learning model is a combination of data and methods that takes an input and produces an output.

For example, a diagnostic model might take patient symptoms and lab results as input and output a prediction about what disease the patient has. The model is basically a recipe: here's how to process this input to get that output.

Think of it like a pharmaceutical production line. Raw materials go in, finished medication comes out. The model defines the steps (measure, mix, compress, coat) and the parameters for each step (compress at 1000 psi for 5 minutes).
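In code, the production-line picture might look like the sketch below: the *steps* are fixed code, and the *parameters* are just numbers those steps use. The feature names and parameter values are made up for illustration; in a real model the numbers would come from training, not from a person typing them in.

```python
import math

# Hypothetical parameters: in a real model these would be learned during training.
parameters = {
    "weights": {"temperature_c": 0.9, "heart_rate": 0.03, "wbc_count": 0.2},
    "bias": -40.0,
}

def predict(patient: dict[str, float], params: dict) -> float:
    """Return a probability-like score between 0 and 1.

    The recipe (the steps) never changes: weight each input, add them up,
    add the bias, then squash the total into the 0-1 range.
    Only the numbers in `params` change when the model is trained.
    """
    total = params["bias"]
    for feature, weight in params["weights"].items():
        total += weight * patient[feature]  # weight each input and add it to the total
    return 1 / (1 + math.exp(-total))       # logistic (sigmoid) squash into 0-1


patient = {"temperature_c": 39.0, "heart_rate": 110, "wbc_count": 15.0}
print(round(predict(patient, parameters), 3))  # 0.802
```

Swapping in different numbers for `parameters` changes the model's behavior without touching the code, which is exactly what training does.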

The key difference between machine learning and other AI approaches: in machine learning, humans don't directly program the rules. Instead, humans set up the process and provide training data (more on this below), and the model works out the rules from those examples.

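When a model like Stable Diffusion gets released, what you're getting is the result of running machine learning algorithms on training data. (GPT-3 is built the same way, although OpenAI offers it through an online service rather than releasing the model itself.) The model contains all the learned values, its parameters, needed to process new inputs.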

What is training data?

Training data is a collection of examples, each with two parts:

- Input

- Expected output

For medical imaging, this might be thousands of X-ray images, each labeled with the correct diagnosis. To build a model that identifies lung cancer in chest X-rays, you'd gather images of both cancerous and non-cancerous lungs, label them, and feed them to the model during training (explained below).
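As a data structure, a training set is just a list of (input, expected output) pairs. In this minimal sketch the file names and labels are hypothetical placeholders; a real imaging dataset would hold the pixel data (or paths to it) and thousands of examples.

```python
from collections import Counter
from dataclasses import dataclass

@dataclass(frozen=True)
class Example:
    image_path: str  # input: where the X-ray image is stored (hypothetical paths)
    label: str       # expected output: the correct diagnosis

training_data = [
    Example("xray_0001.png", "cancer"),
    Example("xray_0002.png", "no cancer"),
    Example("xray_0003.png", "no cancer"),
    Example("xray_0004.png", "cancer"),
]

# A sanity check worth doing on any training set:
# how many examples of each label are there?
print(Counter(ex.label for ex in training_data))
```

Counting labels matters because a set with almost no cancerous images gives the model very little to learn that diagnosis from.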

What is training in machine learning?

Training is the process of feeding labeled data into the model, checking how well the output matches what was expected, and adjusting the model's internal settings to do better next time. These internal settings are called weights or parameters (more on those below). The model repeats this cycle over and over, adjusting parameters each time to get closer to its goal.
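Here is the predict, check, adjust cycle stripped down to a single parameter. The "model" multiplies its input by a weight `w`, and the adjustment rule is gradient descent on squared error. That rule is an assumption for this sketch: it is a common way for a model to work out which direction to nudge each parameter, not the only one. The toy data are chosen so the right answer is `w = 2`.

```python
# Toy training data: (input, expected output) pairs where output = 2 * input.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

w = 0.0               # the model's single parameter, starting from a bad guess
learning_rate = 0.05  # how big each adjustment is

def loss(w: float) -> float:
    """Mean squared error: how far predictions are from expected outputs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)

for step in range(20):
    # 1. Predict and compare: the gradient says which way the error grows.
    grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
    # 2. Adjust: move the parameter a little in the direction that reduces error.
    w -= learning_rate * grad

print(f"learned w = {w:.3f}, loss = {loss(w):.6f}")
```

Nobody tells the loop that the answer is 2; it only ever sees how wrong its predictions are and which direction reduces that error.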

Illustration of one robot training another robot (created using Stable Diffusion)

Say our goal is to correctly identify 99% of pneumonia cases in chest X-rays. We set up our model and run the training data through it. The model predicts which images show pneumonia and which don't. We use a simple metric to score it: correct pneumonia identifications divided by total pneumonia images (clinicians call this sensitivity; in machine learning it's called recall). Suppose it gets 50% right on the first pass.
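The scoring rule above is easy to write down in code. This is a minimal sketch, and the labels are made up to reproduce a 50% first pass:

```python
def sensitivity(predictions: list[bool], truths: list[bool]) -> float:
    """Fraction of true pneumonia cases the model caught (sensitivity / recall).

    True = pneumonia, False = no pneumonia.
    Images without pneumonia are ignored, so this score says nothing about
    false alarms (healthy lungs flagged as pneumonia).
    """
    if len(predictions) != len(truths):
        raise ValueError("need one prediction per image")
    caught = sum(p and t for p, t in zip(predictions, truths))
    total_pneumonia = sum(truths)
    if total_pneumonia == 0:
        raise ValueError("no pneumonia images to score against")
    return caught / total_pneumonia


# Hypothetical first pass: 4 pneumonia images, the model catches 2 of them.
truths      = [True, True, True, True, False, False]
predictions = [True, False, True, False, False, True]
print(sensitivity(predictions, truths))  # 0.5
```

Because it ignores healthy images, a model that flags every X-ray as pneumonia would score 100% on this metric. That is why sensitivity is usually reported alongside specificity, the share of healthy images correctly cleared.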

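The model then adjusts its parameters and tries again. This time it gets 60%. Better. No human told it how to improve: the training algorithm (for neural networks, usually a method called gradient descent) worked out which small changes to the parameters would reduce its errors and made them automatically.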

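This cycle repeats until the model reaches the 99% goal. At that point, we call it trained. Before trusting it on new X-rays, though, we check it on a separate set of images it never saw during training. A score on the training data usually overstates how well a model will do on new patients, so only the score on unseen images tells you roughly how often it will catch pneumonia in practice.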
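The "keep adjusting until we hit the goal" loop can be sketched end to end with a tiny logistic-regression classifier trained by gradient descent. Everything here is an assumption for illustration: one made-up "opacity score" per image, synthetic labels, and a stopping rule that requires 99% sensitivity *and* 99% specificity (the share of healthy images correctly cleared), so the model can't reach the goal just by flagging everything. The last line scores the model on held-out examples it never trained on.

```python
import math

# Hypothetical toy data: one made-up "opacity score" per X-ray (0 to 1)
# and the correct label (1 = pneumonia, 0 = no pneumonia).
train = [(0.1, 0), (0.2, 0), (0.3, 0), (0.4, 0),
         (0.6, 1), (0.7, 1), (0.8, 1), (0.9, 1)]
held_out = [(0.15, 0), (0.35, 0), (0.65, 1), (0.85, 1)]  # never used for training

def predict(x: float, w: float, b: float) -> int:
    """Flag pneumonia (1) when the logistic score is above 0.5."""
    return int(1 / (1 + math.exp(-(w * x + b))) > 0.5)

def sensitivity_specificity(data, w, b):
    """Share of pneumonia cases caught, and share of healthy cases cleared."""
    preds = [(predict(x, w, b), y) for x, y in data]
    tp = sum(p == 1 and y == 1 for p, y in preds)
    tn = sum(p == 0 and y == 0 for p, y in preds)
    positives = sum(y == 1 for _, y in preds)
    negatives = len(preds) - positives
    return tp / positives, tn / negatives

w, b = 0.0, 0.0
learning_rate, target, max_steps = 2.0, 0.99, 20_000

for step in range(1, max_steps + 1):
    # Gradient of the average logistic (log) loss with respect to w and b.
    errors = [(1 / (1 + math.exp(-(w * x + b))) - y, x) for x, y in train]
    w -= learning_rate * sum(e * x for e, x in errors) / len(train)
    b -= learning_rate * sum(e for e, _ in errors) / len(train)

    sens, spec = sensitivity_specificity(train, w, b)
    if sens >= target and spec >= target:  # stop once the goal is met
        break

print(f"stopped after {step} steps: train sensitivity {sens:.2f}, specificity {spec:.2f}")
print("held-out sensitivity, specificity:", sensitivity_specificity(held_out, w, b))
```

On real X-rays the held-out score would typically come out lower than the training score; on this cleanly separable toy data the two happen to match.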

What is a parameter?

Parameters (also called weights and biases) are the internal values in a model that can be adjusted to change its behavior.

Think of a model as a medical device. Parameters are like the device's dials and settings that get tuned to do a specific job, like accurately reading vital signs. Developers don't set these values manually. The model learns them during training.

What is a neural network?

There are many kinds of machine learning models, and neural networks are among the most common. They're loosely inspired by the human brain and how biological neurons communicate.

A neural network has layers: an input layer, one or more hidden layers, and an output layer. In the simplest (fully connected) design, each node (artificial neuron) connects to every node in the next layer. Each connection has its own weight, and each node has its own bias.

An artistic illustration of a neural network (created using Stable Diffusion)

During training, the parameters in each neuron get adjusted to improve performance on the target task.

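Here's how it flows: each neuron takes the weighted sum of its inputs, adds its bias, and runs the result through an activation function, which decides how much signal to pass to the next layer. Early designs used a hard threshold: if the sum cleared it, the neuron "fired"; if not, nothing was passed along. Modern networks mostly use activation functions that pass a graded signal instead. A common one, ReLU, outputs zero for negative sums and passes positive sums through unchanged.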
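One layer of that calculation fits in a few lines. This sketch assumes the ReLU activation (networks use several kinds) and uses made-up weights and biases rather than trained values.

```python
def relu(z: float) -> float:
    """Activation function: sums at or below 0 become 0 (the neuron stays silent)."""
    return max(0.0, z)

def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """Weighted sum of the inputs, plus the bias, passed through the activation."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return relu(total)

def layer(inputs: list[float], weights: list[list[float]], biases: list[float]) -> list[float]:
    """Fully connected layer: every neuron sees every input."""
    return [neuron(inputs, w, b) for w, b in zip(weights, biases)]

# Made-up parameters for a 3-input -> 2 hidden neurons -> 1 output network.
x = [0.5, -1.0, 2.0]
hidden = layer(x, weights=[[0.4, 0.3, 0.5], [-0.6, 0.8, -0.1]], biases=[-0.2, 0.1])
output = layer(hidden, weights=[[1.5, 2.0]], biases=[0.05])

print([round(h, 2) for h in hidden], [round(o, 2) for o in output])  # [0.7, 0.0] [1.1]
```

The second hidden neuron's weighted sum is negative (-1.2), so ReLU outputs 0 and it contributes nothing to the next layer: the modern stand-in for "not firing".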

More layers (and more neurons per layer) mean more parameters, which generally lets the model handle more complex patterns and tasks.
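To see how quickly parameters add up: in a fully connected network, each pair of adjacent layers contributes one weight per connection plus one bias per neuron in the receiving layer. The layer sizes below are arbitrary examples.

```python
def count_parameters(layer_sizes: list[int]) -> int:
    """Weights + biases for a fully connected network.

    layer_sizes lists the number of neurons per layer, input layer first.
    Between a layer of size n_in and the next of size n_out there are
    n_in * n_out weights and n_out biases.
    """
    return sum(n_in * n_out + n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

print(count_parameters([3, 2, 1]))            # 11
print(count_parameters([784, 128, 10]))       # 101770
print(count_parameters([784, 128, 128, 10]))  # 118282
```

Here 784 is the pixel count of a 28×28 image. Adding one more hidden layer of 128 neurons adds 16,512 parameters. For scale, GPT-3 has about 175 billion.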

What is deep learning?

Deep learning uses neural networks with many layers (hence "deep"). These multi-layered networks can detect complex patterns in large datasets and use those patterns to make predictions or automate tasks. Most of the recent AI breakthroughs are powered by deep learning.

What is a generative model?

A generative model learns from existing data and uses what it learned to create new data. It's commonly used to generate new images, video, audio, or text.

There are several types: Generative Adversarial Networks (GANs), Variational Autoencoders (VAEs), flow-based models, and diffusion models. Diffusion models have gotten the most attention lately. For a medical example, researchers have used diffusion models to generate realistic 3D models of human organs from existing medical images.

Realistic 3D model of a human heart (created using Stable Diffusion)

These 3D models can help surgeons plan procedures and give medical students better tools for studying anatomy.
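The "learn from existing data, then create new data" idea can be shown with about the simplest generative model there is: fit a bell curve (normal distribution) to some measurements, then sample new, made-up measurements from it. The heart-rate numbers are invented for illustration. Real generative models such as diffusion models learn vastly more complex patterns, but they follow the same two phases.

```python
import random
import statistics

# "Training data": hypothetical resting heart rates (beats per minute).
observed = [62, 70, 75, 68, 80, 72, 66, 74, 71, 69]

# Learn: this model's only parameters are the mean and standard deviation.
mu = statistics.mean(observed)
sigma = statistics.stdev(observed)

# Generate: draw brand-new values that follow the learned pattern.
rng = random.Random(42)
synthetic = [round(rng.gauss(mu, sigma)) for _ in range(5)]

print(f"learned mean {mu:.1f}, sd {sigma:.1f}")
print("generated:", synthetic)
```

The generated values are new (they need not appear in `observed`), yet they look like plausible heart rates because they come from the learned distribution.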

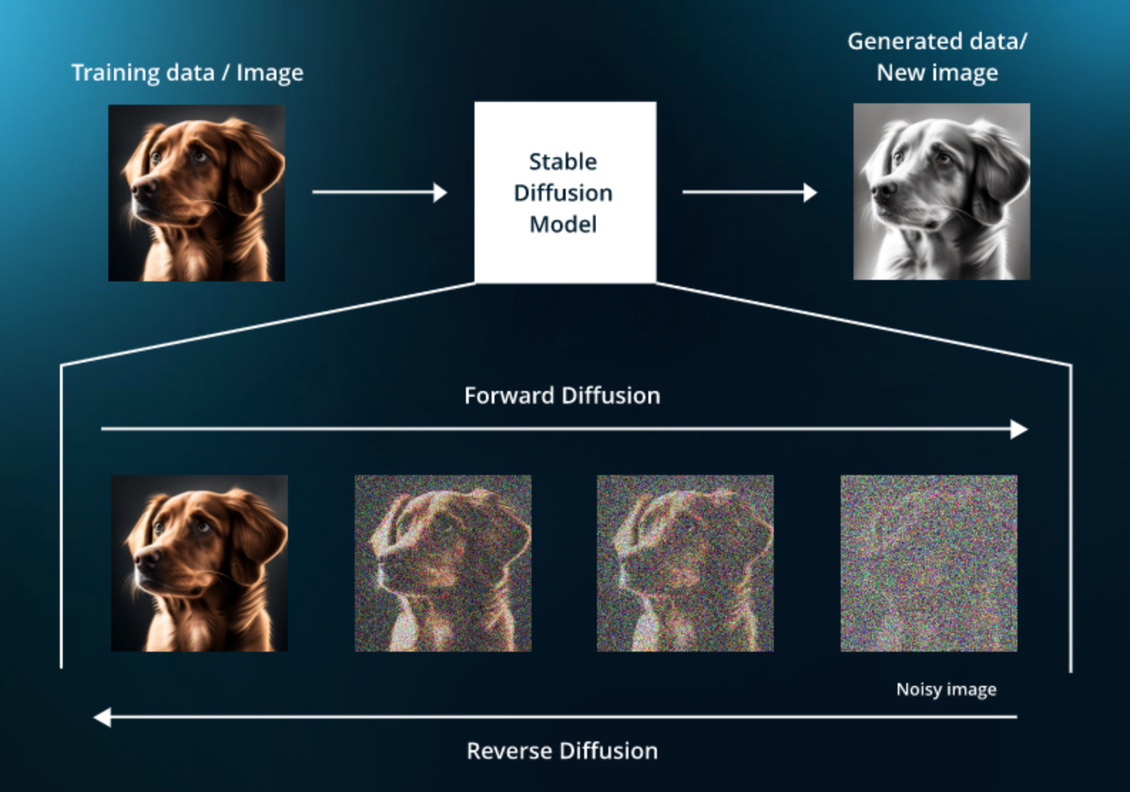

What is a diffusion model?

Diffusion models are a specific type of generative model. During training, noise is added to the training data step by step until it's completely scrambled, and a neural network learns to reverse that process one step at a time.
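The noise-adding half of this is simple enough to sketch. The code assumes the DDPM-style formulation (denoising diffusion probabilistic models) with its linear noise schedule from 0.0001 to 0.02 over 1,000 steps; a given model may use a different schedule. The "image" is just four made-up pixel values. The hard part, a neural network trained to undo each step, is not shown.

```python
import math
import random

T = 1000
# Noise schedule: how much noise is added at each step (linear, DDPM-style).
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]

# alpha_bar[t]: how much of the original signal survives after step t
# (the original pixels end up scaled by its square root).
alpha_bar, running = [], 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bar.append(running)

def noisy_version(x0: list[float], t: int, rng: random.Random) -> list[float]:
    """Jump straight to step t: x_t = sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * noise."""
    keep, noise_scale = math.sqrt(alpha_bar[t]), math.sqrt(1.0 - alpha_bar[t])
    return [keep * x + noise_scale * rng.gauss(0.0, 1.0) for x in x0]

rng = random.Random(0)
image = [0.9, -0.5, 0.3, -0.8]  # a toy "image" of 4 pixel values scaled to [-1, 1]

for t in (0, 250, 500, 999):
    print(f"step {t:4d}: signal kept {math.sqrt(alpha_bar[t]):.3f}",
          [round(v, 2) for v in noisy_version(image, t, rng)])
```

By the last step less than 1% of the original signal is left, so the result is essentially pure noise. In DDPM-style training, the network learns to predict the noise that was added at each step, so running the steps backward can turn fresh noise into a new image.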

Once trained, you can feed the model random noise and a description of what you want, and it generates new data matching that description.

Explaining how diffusion models work (Source: https://www.leewayhertz.com/how-to-train-a-diffusion-model/)

Text-to-image models are one example. In medicine, you could type "human heart with an enlarged left ventricle" and the model would generate an image of that. This could help doctors visualize conditions and aid in diagnosis.

If you want a deeper understanding of diffusion models (with lots of math), check out this article: Diffusion model clearly explained.

What is GPT-3?

Putting it together with what we've covered:

- GPT-3 is an AI system that generates text.

- It's a generative model, creating new text based on patterns learned from training data.

- It uses deep learning with multilayered neural networks.

- It's general-purpose. You can use it for writing, summarization, code generation, and more without retraining it for each task.

What makes GPT-3 interesting is that one model covers all of these jobs: it can write a blog post, answer a question, or generate code. Instead of fine-tuning it (retraining it on task-specific data), you usually just describe the task in the prompt, sometimes with a few worked examples.
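GPT-3 itself is a huge transformer network trained to predict the next token (roughly, the next word piece) from the text before it. A toy version of "learn patterns from text, then generate new text" can be built by simply counting which word follows which. This bigram sketch is nothing like GPT-3 in scale or method, and the training text is made up; it only shows the generate-one-word-at-a-time loop.

```python
import random
from collections import defaultdict

corpus = ("the patient has a fever . the patient has a cough . "
          "the doctor orders a chest x-ray . the x-ray shows pneumonia .").split()

# "Training": record which words were seen following each word.
next_words = defaultdict(list)
for current, following in zip(corpus, corpus[1:]):
    next_words[current].append(following)

def generate(start: str, max_words: int, rng: random.Random) -> list[str]:
    """Repeatedly pick a plausible next word, given only the previous word."""
    words = [start]
    while len(words) < max_words and next_words[words[-1]]:
        words.append(rng.choice(next_words[words[-1]]))
    return words

print(" ".join(generate("the", 12, random.Random(1))))
```

Because the sketch looks at only one previous word, it can produce odd combinations such as "the x-ray shows pneumonia . the patient has a chest x-ray". Models like GPT-3 condition on far more context, which is a large part of why their text stays coherent.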

What is Stable Diffusion?

The description given by Wikipedia is:

‘Stable Diffusion is a deep learning, text-to-image model released in 2022. It is primarily used to generate detailed images conditioned on text descriptions, though it can also be applied to other tasks such as inpainting, outpainting, and generating image-to-image translations guided by a text prompt.’

- Stable Diffusion is an AI that turns text descriptions into images.

- It uses machine learning: instead of being explicitly programmed, it learned from massive amounts of training data.

- Under the hood, it's a deep neural network with many layers of nodes that process inputs and pass results forward.

- It's a generative model, meaning it creates new content from what it learned.

- Specifically, it's a diffusion model: it generates images by starting with noise and gradually shaping it based on your text prompt.

Now you know the basics. Go confuse your friends with your new vocabulary.

References

- A crash course in AI terms - https://mythicalai.substack.com/p/a-crash-course-in-ai-terms-machine

- Diffusion model clearly explained - https://medium.com/@steinsfu/diffusion-model-clearly-explained-cd331bd41166